Testing software is a science all on its own. At Imint, it can be even more complicated, not only because we are very rigorous – so are other companies – but because of the hardware involved. We need to test on many different devices from different manufacturers, using different operating systems, chipsets, CPU architectures and other parameters.

That requires a lot of preparation, especially for the integration tests, when all components are supposed to come together. We care deeply about variables like battery life and heat dissipation. The two are closely related and closely monitored: higher temperatures mean more energy use and worse performance. You could say we put a lot of effort into being “cool”.

How we make the video quality testing process more efficient

When testing our Vidhance video enhancement software, other software on the device isn’t necessarily reliable, especially early in the development cycle. To reduce dependencies and possible crashes, we use newly flashed devices with only the bare minimum installed. Building Android from source can require upwards of 100 GB of disk space (although the end result is much smaller) and a fair bit of time, and that is just for one of many versions.

We found a clever way of managing such sizes efficiently. All required versions are stored on a computer with plenty of disk space, with as much as possible precompiled, and with the relevant parts ready to be replaced with Vidhance code. If these files were later moved to a new computer, their modification timestamps would change, and the build system would conclude that everything had changed and rebuild it all from scratch.

To avoid this, the code is stored in a Docker container, a lightweight, isolated environment that behaves much like a virtual machine and has its own file system. The container’s file system keeps the build system from seeing spurious changes, so only the new parts are built before the build is assembled. The Docker image also ensures the build environment is the same every time. This is fast and efficient.
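To make the timestamp problem concrete, here is a minimal Python sketch. It assumes a make-style rule in which a target is rebuilt whenever its source has a newer modification time; the real Android build system's change detection may differ. The file names (`module.c`, `module.o`), the fixed timestamps, and the `needs_rebuild` helper are all hypothetical and only illustrate the idea.

```python
import os
import shutil
import tempfile


def needs_rebuild(source: str, target: str) -> bool:
    """Make-style check: rebuild if the target is missing or older than its source."""
    if not os.path.exists(target):
        return True
    return os.path.getmtime(source) > os.path.getmtime(target)


def demo() -> dict:
    """Compare a naive copy with a timestamp-preserving copy of a prebuilt tree."""
    results = {}
    with tempfile.TemporaryDirectory() as root:
        # Hypothetical prebuilt tree on the original machine.
        old = os.path.join(root, "old_machine")
        os.makedirs(old)
        src = os.path.join(old, "module.c")
        obj = os.path.join(old, "module.o")
        for path in (src, obj):
            with open(path, "w") as handle:
                handle.write("placeholder\n")
        # Pin timestamps: the source was edited at t=1000, the object built at t=2000.
        os.utime(src, (1_000, 1_000))
        os.utime(obj, (2_000, 2_000))
        results["original"] = needs_rebuild(src, obj)

        # Naive move: only the contents are copied, so each file gets a fresh
        # timestamp. We simulate the source landing just after the object.
        naive = os.path.join(root, "naive_copy")
        os.makedirs(naive)
        naive_obj = shutil.copyfile(obj, os.path.join(naive, "module.o"))
        naive_src = shutil.copyfile(src, os.path.join(naive, "module.c"))
        os.utime(naive_obj, (3_000, 3_000))
        os.utime(naive_src, (3_001, 3_001))
        results["naive_copy"] = needs_rebuild(naive_src, naive_obj)

        # Timestamp-preserving move: copy2 also copies the modification times.
        kept = os.path.join(root, "preserved_copy")
        os.makedirs(kept)
        kept_obj = shutil.copy2(obj, os.path.join(kept, "module.o"))
        kept_src = shutil.copy2(src, os.path.join(kept, "module.c"))
        results["preserved_copy"] = needs_rebuild(kept_src, kept_obj)
    return results


if __name__ == "__main__":
    for scenario, rebuild in demo().items():
        print(f"{scenario}: rebuild needed = {rebuild}")
```

In this sketch only the naive copy triggers a rebuild. Shipping the precompiled tree together with its file system metadata, as a container image does, plays the same role as the timestamp-preserving copy here.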

No cloud, no problem

Testing, and evaluating your video quality testing procedures, is a continuous process. It’s a constant trade-off between time and the granularity of your bug-catching net. The later a problem is discovered, the more expensive and stressful it becomes to fix. All the software testing we have talked about here is done locally on our premises. However, some tuning and calibration can be performed on the customer’s premises, depending on the feature and our agreements.

Some of the automatic tests could certainly be performed in the cloud, but given our need for specialized, on-demand devices and GPUs, suitable solutions are harder to find. Services like AWS Device Farm enable app testing, but we often want to reprogram the lower levels of the device itself, mostly on phones that have not yet been released. We know of no such service (maybe this could be a business idea for someone?).

In any case, this is no problem for us: the bottleneck is usually the tests’ runtime on the phone, and moving to the cloud would also open up a new attack vector in terms of IT security. That is why we haven’t pursued this avenue.

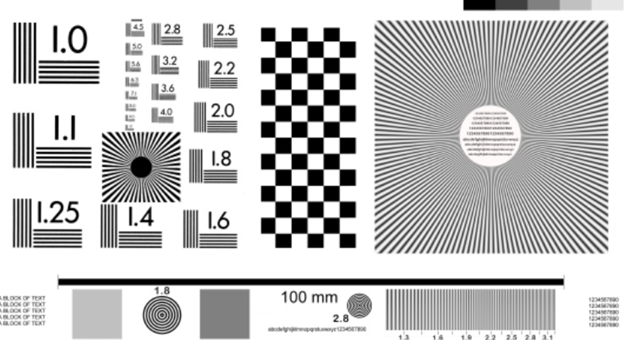

Seeing is believing

Want to see with your own eyes how much we can improve video quality, save on battery consumption or fine-tune auto zoom and object tracking in Live Composer? Contact us to book a demo of Vidhance and discuss testing and other parts of the process of integrating video stabilization with our video quality experts. For inspiration, insights and best practices for the next generation of video enhancement, enter your email address and subscribe to our newsletter.